Hypothesis-Driven Product Development: A Complete Implementation Guide

Welcome to this week's edition of Mastering Product! Today, we're diving deep into hypothesis-driven product development, an approach that has revolutionized how the most successful product teams operate. Drawing from my experience at Booking.com and Eneco, I'll share a practical framework that has consistently delivered results, along with templates you can implement immediately.

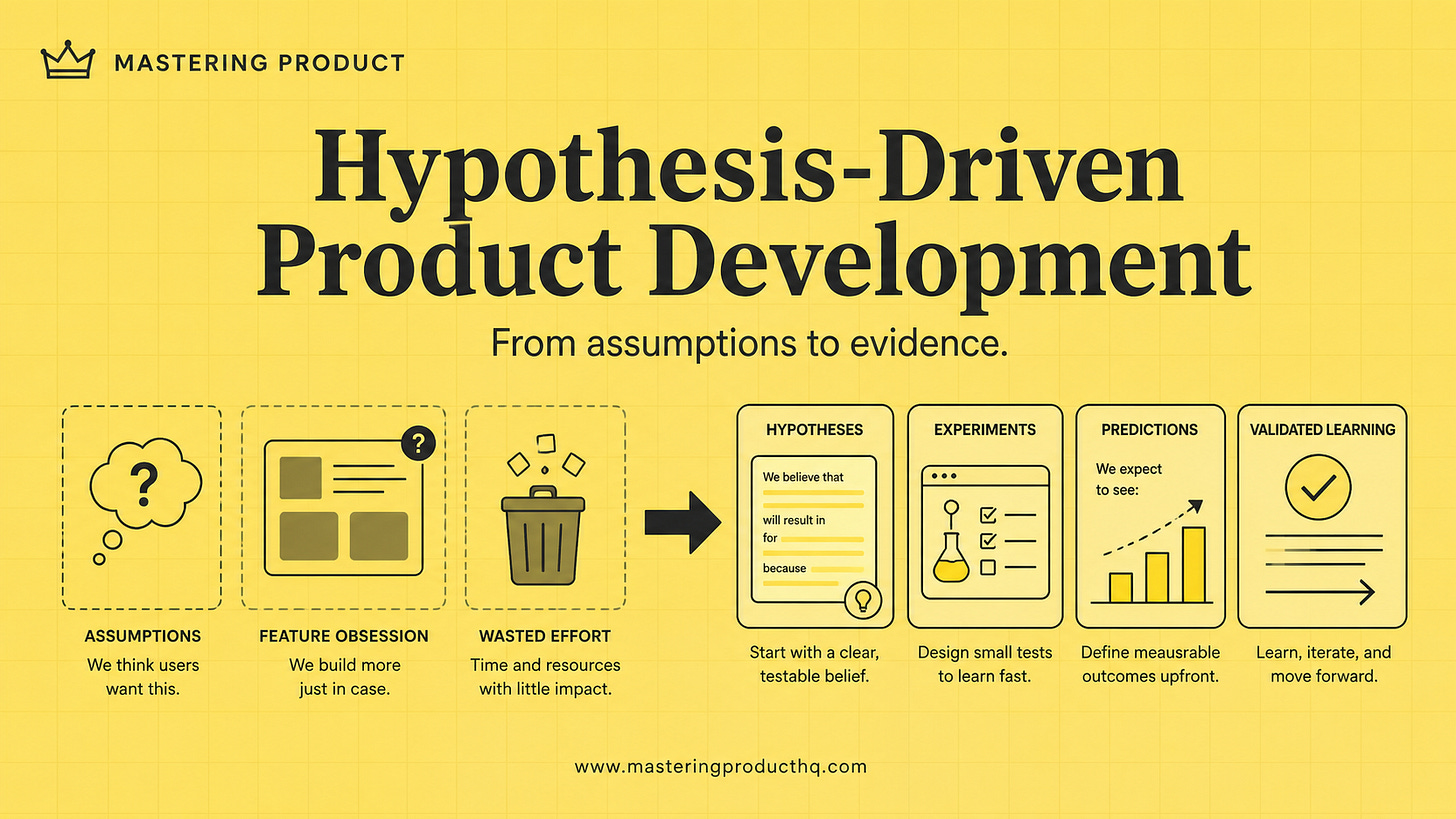

The High Cost of Assumption-Based Product Development

Let's start with a sobering statistic: According to the Product Development and Management Association, 40% of new products fail to deliver their expected ROI. Why? Because traditional product development relies on a dangerous formula:

Untested assumptions + Delayed feedback + Feature obsession = Wasted resources

During my time leading product at Booking.com, I saw millions of euros invested in features that customers never used. At Eneco, entire quarters were spent building functionality that solved problems users didn't actually have.

The root causes of these failures are consistent:

Assumption overload: Requirements based on what we think users want

Delayed validation: Feedback that arrives after significant investment

Output over outcomes: Measuring success by features shipped rather than value delivered

All-or-nothing risk: Addressing all uncertainties at once rather than incrementally

Hypothesis-driven development breaks this cycle by fundamentally changing how we approach product decisions.

The Hypothesis-Driven Mindset: A Transformational Shift

Shifting to hypothesis-driven thinking requires rewiring how product teams think about their work:

| Traditional Approach | Hypothesis-Driven Approach |
| --- | --- |
| "We know what users want" | "We have informed beliefs about what users might value" |
| "Let's build this feature" | "Let's test if this solution addresses a real problem" |
| "Did we ship on time?" | "Did we learn what works and what doesn't?" |
| "Success = launching features" | "Success = validated learning that drives outcomes" |

At its core, this approach treats every product decision as a testable hypothesis. Nothing is assumed; everything is validated through evidence.

The Three Essential Components of Effective Hypotheses

To implement hypothesis-driven development, you need to master three key elements:

1. Problem Hypothesis

A clear problem hypothesis articulates:

Who has the problem (specific user segment)

What the problem is (pain point or job-to-be-done)

Why it matters (impact on users and business)

Example format:

We believe that [user segment] experience [problem] when trying to [achieve goal], which causes [negative impact].

Real-world example from Booking.com:

We believe that business travelers experience frustration when trying to find accommodations that meet their company's policy requirements, which causes them to spend excessive time filtering options and often book out-of-policy stays.

2. Solution Hypothesis

The solution hypothesis specifies:

What solution you propose

How it addresses the problem

What outcomes you expect

Example format:

We believe that by [implementing solution], [user segment] will [expected behavior change], which will result in [business outcome].

Real-world example from Booking.com:

We believe that by implementing a 'Business Travel Mode' with pre-filtered options based on company policies, business travelers will find suitable accommodations 50% faster, which will result in a 15% increase in business bookings and 20% higher compliance with travel policies.

3. Testable Predictions

For each hypothesis, define specific, measurable predictions:

Example format:

If our hypothesis is true, we expect to see [specific, measurable outcome] within [timeframe].

Real-world example from Booking.com:

If our hypothesis is true, we expect to see:

- Average time to booking for business travelers decrease by at least 30%

- Business booking conversion rate increase by at least 10%

- Policy compliance rate increase by at least 15%

- Positive feedback specifically mentioning ease of finding policy-compliant options
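Quantitative predictions like these can be written down as data before the experiment starts, so the pass/fail check is mechanical. The following is a minimal sketch, not tooling from Booking.com: the `Prediction` class, the metric names, and the sample measurements are hypothetical, and it assumes each "at least X%" threshold means a relative change against a baseline. The qualitative prediction (user feedback) is left out because it needs human review.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Prediction:
    metric: str            # hypothetical metric name
    direction: str         # "decrease" or "increase"
    min_change_pct: float  # minimum relative change, in percent

    def is_met(self, baseline: float, observed: float) -> bool:
        """Return True if the relative change meets the threshold."""
        change_pct = (observed - baseline) / baseline * 100
        if self.direction == "decrease":
            return -change_pct >= self.min_change_pct
        return change_pct >= self.min_change_pct


# Thresholds taken from the three quantitative predictions above.
PREDICTIONS = [
    Prediction("avg_time_to_booking_min", "decrease", 30),
    Prediction("business_conversion_rate", "increase", 10),
    Prediction("policy_compliance_rate", "increase", 15),
]


def evaluate(measurements: dict[str, tuple[float, float]]) -> dict[str, bool]:
    """Map each metric to whether its prediction was met.

    `measurements` maps metric name -> (baseline, observed).
    """
    return {p.metric: p.is_met(*measurements[p.metric]) for p in PREDICTIONS}


if __name__ == "__main__":
    # Made-up numbers, for illustration only.
    example = {
        "avg_time_to_booking_min": (20.0, 13.0),
        "business_conversion_rate": (0.040, 0.043),
        "policy_compliance_rate": (0.60, 0.72),
    }
    for metric, met in evaluate(example).items():
        print(f"{metric}: {'met' if met else 'not met'}")
```

Writing the thresholds down this way before launch also keeps the success criteria fixed when the results come in.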

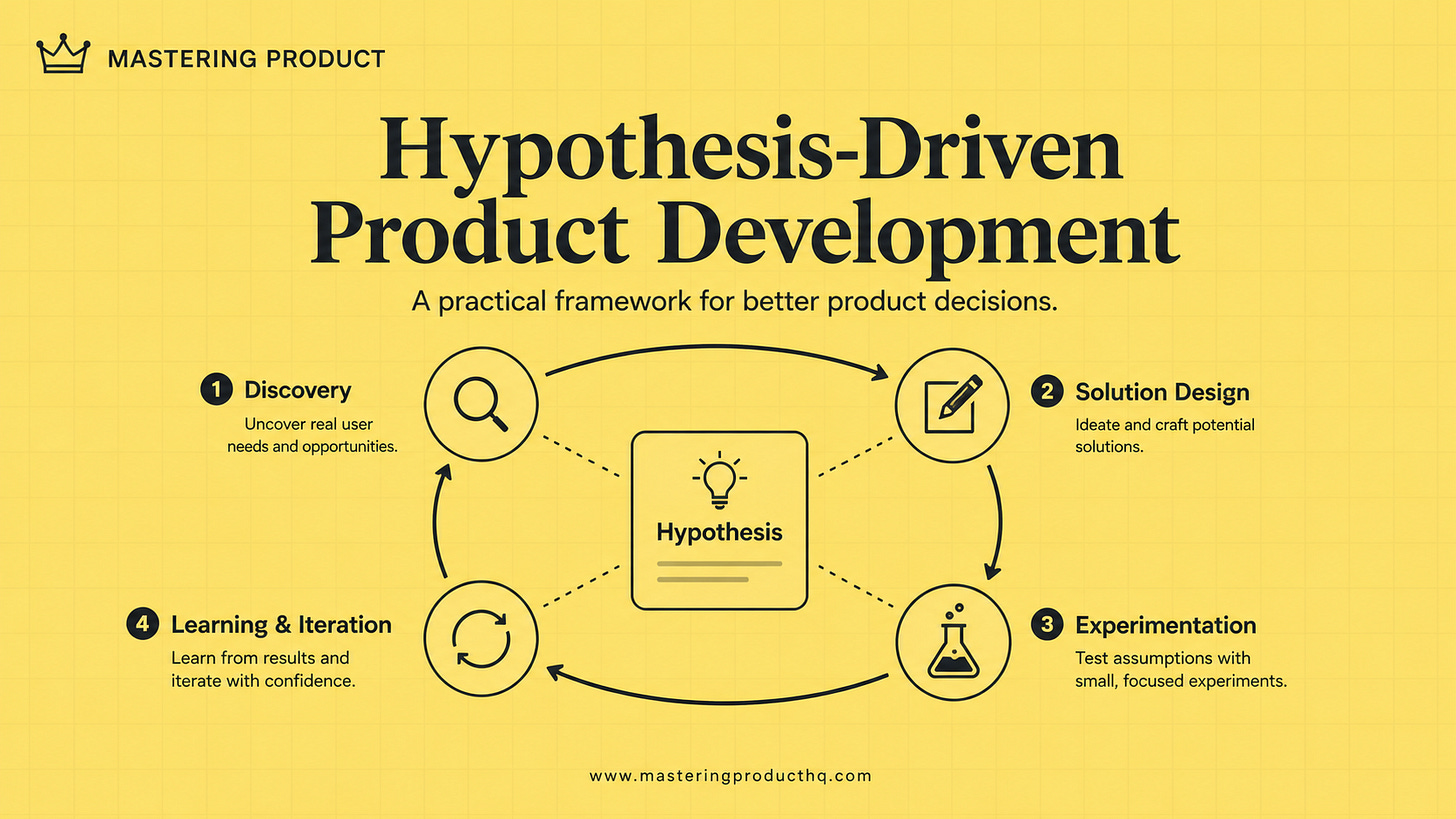

The Hypothesis-Driven Development Cycle: A Practical Framework

Implementing hypothesis-driven development follows this four-phase process:

Phase 1: Discovery

Identify opportunities: Use data, user research, and market insights to identify potential problem spaces

Formulate problem hypotheses: Clearly articulate who has what problem and why it matters

Prioritize hypotheses: Focus on problems with the highest potential impact and learning value

Pro tip: Start by analyzing customer support tickets, user session recordings, and analytics data to identify patterns of user friction.

Phase 2: Solution Design

Develop solution hypotheses: Propose solutions to address the prioritized problems

Define success metrics: Establish clear, measurable indicators of hypothesis validation

Design minimum viable experiments: Create the smallest possible test to validate your hypotheses

Pro tip: Resist the urge to design the "perfect" solution. Instead, ask: "What's the simplest way to test if we're on the right track?"

Phase 3: Experimentation

Build experiment: Develop the minimum viable product or feature to test your hypothesis

Launch and measure: Release your experiment to users and collect data

Analyze results: Evaluate whether the data supports or refutes your hypotheses

Pro tip: The goal isn't to build a perfect feature—it's to validate your hypothesis with the least amount of effort. Consider non-code experiments first, like mockups, prototypes, or even "Wizard of Oz" tests.
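For experiments that compare a conversion rate between a control group and a test group, one common way to judge whether the data supports the hypothesis is a two-proportion z-test. The sketch below is an illustration under stated assumptions, not a description of how Booking.com or Eneco analyzed results: the function name and the sample counts are hypothetical, and it assumes independent users and samples large enough for the normal approximation.

```python
import math
from statistics import NormalDist


def two_proportion_z_test(
    conv_a: int, n_a: int, conv_b: int, n_b: int
) -> tuple[float, float]:
    """Return (z, two-sided p-value) for the difference in rates, B minus A.

    conv_a / n_a: conversions and users in the control group.
    conv_b / n_b: conversions and users in the test group.
    """
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Pooled rate under the null hypothesis that both groups convert equally.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("no variation in the data; the test is undefined")
    z = (p_b - p_a) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


if __name__ == "__main__":
    # Made-up counts: 400 of 10,000 control users vs. 460 of 10,000 test users.
    z, p = two_proportion_z_test(400, 10_000, 460, 10_000)
    print(f"z = {z:.2f}, p = {p:.3f}")
```

A small p-value only says the difference is unlikely to be noise. Whether the lift is large enough to meet the prediction you wrote down is a separate check.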

Phase 4: Learning and Iteration

Document learnings: Capture insights regardless of whether hypotheses were validated

Share knowledge: Ensure learnings are accessible across the organization

Refine hypotheses: Use insights to refine existing hypotheses or develop new ones

Pro tip: Create a "learning wall" (physical or digital) where teams can share experiment results and insights, fostering a culture of experimentation.

Implementing Hypothesis-Driven Development in Your Organization

Transitioning to hypothesis-driven development requires changes at both team and organizational levels:

Team Level Implementation

1. Hypothesis Formation Workshops

Conduct regular workshops where teams collaboratively develop and refine hypotheses. Structure these sessions to include:

Review of user research and data

Problem hypothesis formulation

Solution hypothesis brainstorming

Prediction definition and success criteria

Workshop Template:

1. Problem Space Exploration (30 minutes)

- What user problems have we observed?

- What data supports the existence of these problems?

- Which user segments are most affected?

2. Problem Hypothesis Formulation (30 minutes)

- For each potential problem, complete:

"We believe that [user segment] experience [problem] when trying to [achieve goal], which causes [negative impact]."

3. Solution Hypothesis Development (45 minutes)

- For each problem hypothesis, complete:

"We believe that by [implementing solution], [user segment] will [expected behavior change], which will result in [business outcome]."

4. Prediction Definition (30 minutes)

- For each solution hypothesis, define:

  - What specific metrics will change?

  - By how much?

  - In what timeframe?

  - What qualitative feedback would validate success?

5. Experiment Design (45 minutes)

- What is the minimum viable experiment to test this hypothesis?

- What resources are required?

- What risks need to be mitigated?

- How will we measure results?

2. Hypothesis Tracking System

Implement a system to track hypotheses throughout their lifecycle. This can be as simple as a shared spreadsheet or as sophisticated as a dedicated tool. Key elements to track:

Hypothesis ID and description

Current status (proposed, in testing, validated, invalidated)

Key metrics and targets

Actual results

Learnings and next steps
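If a spreadsheet starts to feel limiting, the same fields map directly onto a small record type. This is a minimal sketch, assuming the four statuses listed above and a simple rule about which status changes are allowed; the class names, field names, and transition rule are hypothetical and should be adapted to your own process.

```python
from dataclasses import dataclass, field
from enum import Enum


class Status(Enum):
    PROPOSED = "proposed"
    IN_TESTING = "in testing"
    VALIDATED = "validated"
    INVALIDATED = "invalidated"


# Allowed lifecycle moves (an assumption, not a rule from the article).
ALLOWED = {
    Status.PROPOSED: {Status.IN_TESTING},
    Status.IN_TESTING: {Status.VALIDATED, Status.INVALIDATED},
    Status.VALIDATED: set(),
    Status.INVALIDATED: set(),
}


@dataclass
class HypothesisRecord:
    hypothesis_id: str
    description: str
    targets: dict[str, str]  # key metrics and their targets
    status: Status = Status.PROPOSED
    actual_results: dict[str, str] = field(default_factory=dict)
    learnings: list[str] = field(default_factory=list)
    next_steps: list[str] = field(default_factory=list)

    def move_to(self, new_status: Status) -> None:
        """Change status, rejecting moves the lifecycle does not allow."""
        if new_status not in ALLOWED[self.status]:
            raise ValueError(
                f"cannot move from {self.status.value} to {new_status.value}"
            )
        self.status = new_status


if __name__ == "__main__":
    record = HypothesisRecord(
        hypothesis_id="H-001",  # hypothetical ID scheme
        description="Business Travel Mode speeds up policy-compliant booking",
        targets={"avg_time_to_booking": "decrease by at least 30%"},
    )
    record.move_to(Status.IN_TESTING)
    print(record.hypothesis_id, record.status.value)
```

Rejecting a jump straight from "proposed" to "validated" is one way to make sure every hypothesis passes through an actual test.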

3. Experiment Review Meetings

Hold meetings every two weeks to review experiment results and extract learnings:

Present data against predictions

Discuss whether hypotheses were validated or invalidated

Capture key insights

Determine next steps based on learnings

Organization Level Implementation

1. Outcome-Based Planning

Shift planning processes from feature-based roadmaps to outcome-based roadmaps:

Define clear business and user outcomes

Allow teams to develop hypotheses about how to achieve those outcomes

Measure success by outcomes achieved, not features shipped

2. Learning-Oriented Culture

Foster a culture that values learning over being right:

Celebrate invalidated hypotheses as valuable learning

Share learnings across teams through showcases and documentation

Recognize and reward evidence-based decision making

3. Capability Building

Develop key capabilities across the organization:

Train teams in hypothesis formation and experimental design

Build data literacy to enable hypothesis validation

Develop rapid prototyping skills to enable quick experimentation

Common Pitfalls and How to Avoid Them

1. Hypothesis Formulation Pitfalls

Pitfall: Vague, untestable hypotheses that don't lead to clear experiments.

Solution: Use the SMART framework for hypothesis creation:

Specific: Clearly define the user segment, problem, and proposed solution

Measurable: Include concrete metrics that will validate or invalidate the hypothesis

Achievable: Ensure the hypothesis can be tested with available resources

Relevant: Connect the hypothesis to important user or business outcomes

Time-bound: Specify when you expect to see results

2. Experiment Design Pitfalls

Pitfall: Over-engineered experiments that take too long to deliver learnings.

Solution: Follow the Minimum Viable Experiment (MVE) approach:

What's the smallest experiment that could validate or invalidate your hypothesis?

Can you test with a prototype before building the real thing?

Can you limit the test to a small user segment?
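Limiting a test to a small user segment is often done with deterministic bucketing: hash the user ID together with the experiment name and admit only users whose hash falls in a small range. The sketch below shows one way to do this with the standard library; the function name, the experiment name, and the ID format are hypothetical.

```python
import hashlib


def in_experiment(user_id: str, experiment: str, rollout_pct: float) -> bool:
    """Deterministically place roughly `rollout_pct` percent of users in the test.

    The same user always gets the same answer for the same experiment,
    and different experiments get independent splits.
    """
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % 10_000  # 0..9999
    return bucket < rollout_pct * 100


if __name__ == "__main__":
    users = [f"user-{i}" for i in range(20_000)]  # made-up IDs
    exposed = sum(in_experiment(u, "business-travel-mode", 5) for u in users)
    print(f"{exposed} of {len(users)} users are in the 5% test group")
```

Because assignment depends only on the ID and the experiment name, no lookup table is needed, and raising `rollout_pct` later keeps the original test users in the group.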

3. Analysis Pitfalls

Pitfall: Confirmation bias leading teams to interpret results in ways that support their existing beliefs.

Solution: Implement structured analysis processes:

Define success criteria before seeing results

Have neutral parties review data and conclusions

Actively look for evidence that contradicts your hypothesis

4. Learning Capture Pitfalls

Pitfall: Learnings get lost or aren't shared across the organization.

Solution: Create a systematic learning repository:

Document all hypotheses and results in a central location

Hold regular knowledge-sharing sessions

Create learning summaries that distill key insights

Case Study: Hypothesis-Driven Development at Eneco

When I was leading product at Eneco, we transformed our approach to developing energy management solutions by implementing hypothesis-driven development. Here's how we applied this methodology to create a successful new feature:

Initial Problem Hypothesis

"We believe that residential solar panel owners experience anxiety about whether their investment is performing as expected, which causes them to frequently check multiple systems and still feel uncertain about performance."

Research Validation

Through user interviews and data analysis, we confirmed that:

78% of solar panel owners checked their production data at least 3 times per week

64% expressed uncertainty about whether their system was performing optimally

42% had contacted customer support with concerns about system performance

Solution Hypothesis

"We believe that by implementing a 'Performance Health Score' that compares actual production to expected production (based on weather, panel type, and installation specifics), solar panel owners will feel more confident about their system's performance, which will result in higher satisfaction scores and reduced support contacts."

Minimum Viable Experiment

Rather than building a full feature, we:

Created a simple algorithm to calculate expected vs. actual production

Generated weekly performance reports for a test group of 500 customers

Sent these reports via email for 4 weeks

Measured satisfaction, support contacts, and engagement
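The article does not describe the algorithm itself, so the following is only a hypothetical illustration of how small an "expected vs. actual" calculation can be for a first experiment. It assumes the expected production figure already exists (for example from a weather-based model, which is not shown), and the function names, thresholds, and report wording are all invented for this sketch.

```python
def performance_ratio(actual_kwh: float, expected_kwh: float) -> float:
    """Return actual production as a fraction of expected production."""
    if expected_kwh <= 0:
        raise ValueError("expected_kwh must be positive")
    return actual_kwh / expected_kwh


def health_label(
    ratio: float, ok_threshold: float = 0.9, warn_threshold: float = 0.75
) -> str:
    """Turn a ratio into a plain-language label (thresholds are assumptions)."""
    if ratio >= ok_threshold:
        return "performing as expected"
    if ratio >= warn_threshold:
        return "slightly below expected"
    return "below expected"


def weekly_report(customer_id: str, actual_kwh: float, expected_kwh: float) -> str:
    """Build the one-line summary that could go into a weekly email."""
    ratio = performance_ratio(actual_kwh, expected_kwh)
    return (
        f"Customer {customer_id}: produced {actual_kwh:.0f} kWh of an expected "
        f"{expected_kwh:.0f} kWh ({ratio:.0%}), {health_label(ratio)}."
    )


if __name__ == "__main__":
    # Made-up customer and readings.
    print(weekly_report("C-001", 95, 100))
```

A calculation this simple can be run offline and emailed, which is what makes it suitable for a four-week test before any app work begins.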

Results

The experiment validated our hypothesis:

Customer satisfaction among the test group increased by 26%

Support contacts decreased by 31%

89% of test users reported feeling more confident about their system's performance

Iteration

Based on these learnings, we:

Refined the algorithm to provide daily rather than weekly updates

Integrated the feature directly into our mobile app

Added actionable recommendations when performance was below expectations

The final feature, launched to all customers, resulted in a 22% increase in overall customer satisfaction and a 28% reduction in support costs.

Practical Templates for Hypothesis-Driven Development

To help you implement hypothesis-driven development in your organization, I've created several templates you can adapt to your needs:

1. Hypothesis Formation Template

Problem Hypothesis:

We believe that [user segment] experience [problem] when trying to [achieve goal], which causes [negative impact].

Evidence for this problem:

- [Data point 1]

- [Data point 2]

- [User research insight]

Solution Hypothesis:

We believe that by [implementing solution], [user segment] will [expected behavior change], which will result in [business outcome].

Testable Predictions:

If our hypothesis is true, we expect to see:

- [Metric 1] change by [amount] within [timeframe]

- [Metric 2] change by [amount] within [timeframe]

- [Qualitative feedback] from users

2. Experiment Design Canvas

Hypothesis Summary:

[Brief description of problem and solution hypothesis]

Experiment Goal:

[What specific learning are we seeking?]

Minimum Viable Experiment:

[Description of the smallest possible experiment to test the hypothesis]

User Segment:

[Who will participate in the experiment?]

Success Metrics:

[Quantitative and qualitative measures that will validate or invalidate the hypothesis]

Timeline:

- Experiment build: [dates]

- Experiment launch: [date]

- Data collection: [dates]

- Analysis: [dates]

Resources Required:

[Team members, tools, and other resources needed]

Risks and Mitigations:

[Potential risks and how they'll be addressed]

3. Experiment Results Template

Hypothesis Tested:

[Restate the hypothesis]

Experiment Design:

[Brief description of how the experiment was conducted]

Results:

- [Metric 1]: [Actual result] vs. [Prediction]

- [Metric 2]: [Actual result] vs. [Prediction]

- [Qualitative feedback summary]

Hypothesis Status:

[Validated / Partially Validated / Invalidated]

Key Learnings:

- [Learning 1]

- [Learning 2]

- [Learning 3]

Next Steps:

- [Action 1]

- [Action 2]

- [Action 3]

Key Takeaways: The Competitive Advantage of Hypothesis-Driven Development

Reduce risk through validated learning: Test assumptions early and often to avoid costly mistakes

Accelerate time-to-value: Focus on learning quickly, not building perfectly

Optimize resource allocation: Invest in solutions with proven impact

Build a culture of evidence-based decisions: Rely on data, not opinions

Evolve continuously: Adapt to changing user needs and market conditions

In today's rapidly changing market, the ability to learn and adapt quickly is perhaps the greatest competitive advantage a product team can have. Hypothesis-driven development provides a structured approach to continuous learning that transforms not just your products, but your entire organization.

As you begin implementing hypothesis-driven development, remember that the methodology itself should be treated as a hypothesis: start small, experiment with the approach, measure results, and refine based on what you learn. The journey toward becoming a truly hypothesis-driven organization is itself an exercise in continuous learning and improvement.

How are you implementing hypothesis-driven development in your organization? What challenges have you faced? Reply to this email to share your experience. I read every response and often feature reader insights in future newsletters.

Want to get started with hypothesis-driven development? Download my free Hypothesis Testing Template for a ready-to-use tool to implement with your team.