I Encoded 12 Product Frameworks Into an AI Toolkit Any PM Can Use

How I turned 20 years of product management expertise into 12 MCP tools that any AI assistant can use natively.

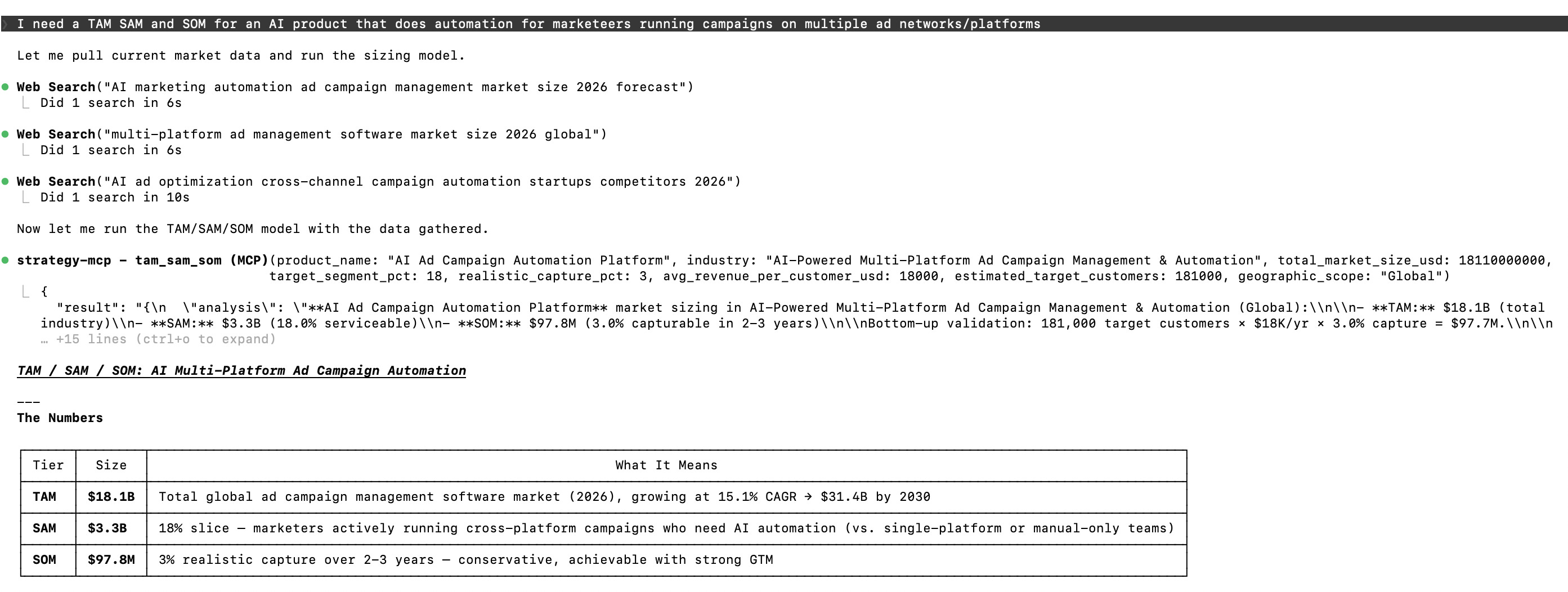

Last week I asked Claude to run a TAM/SAM/SOM analysis for an AI ad automation product. I typed one sentence. Here's what came back.

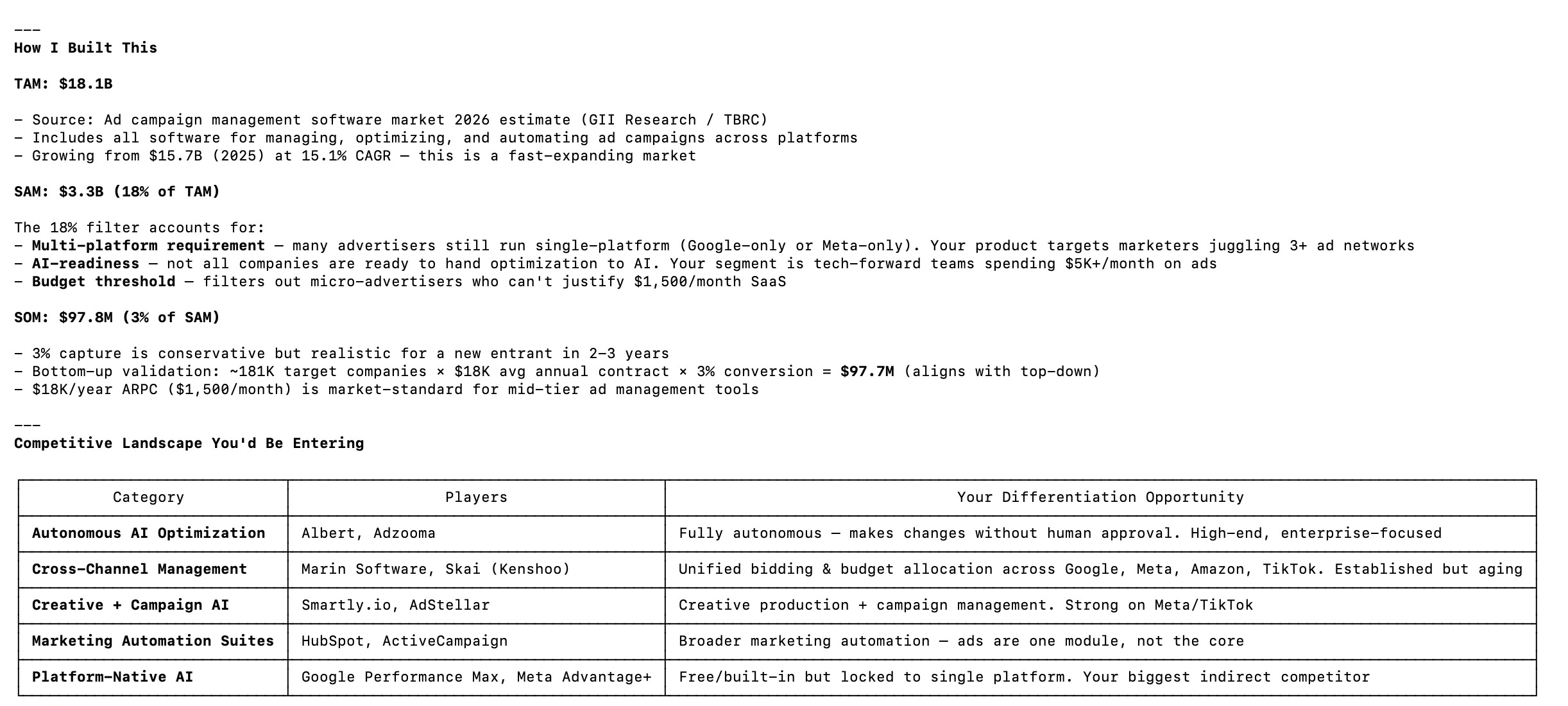

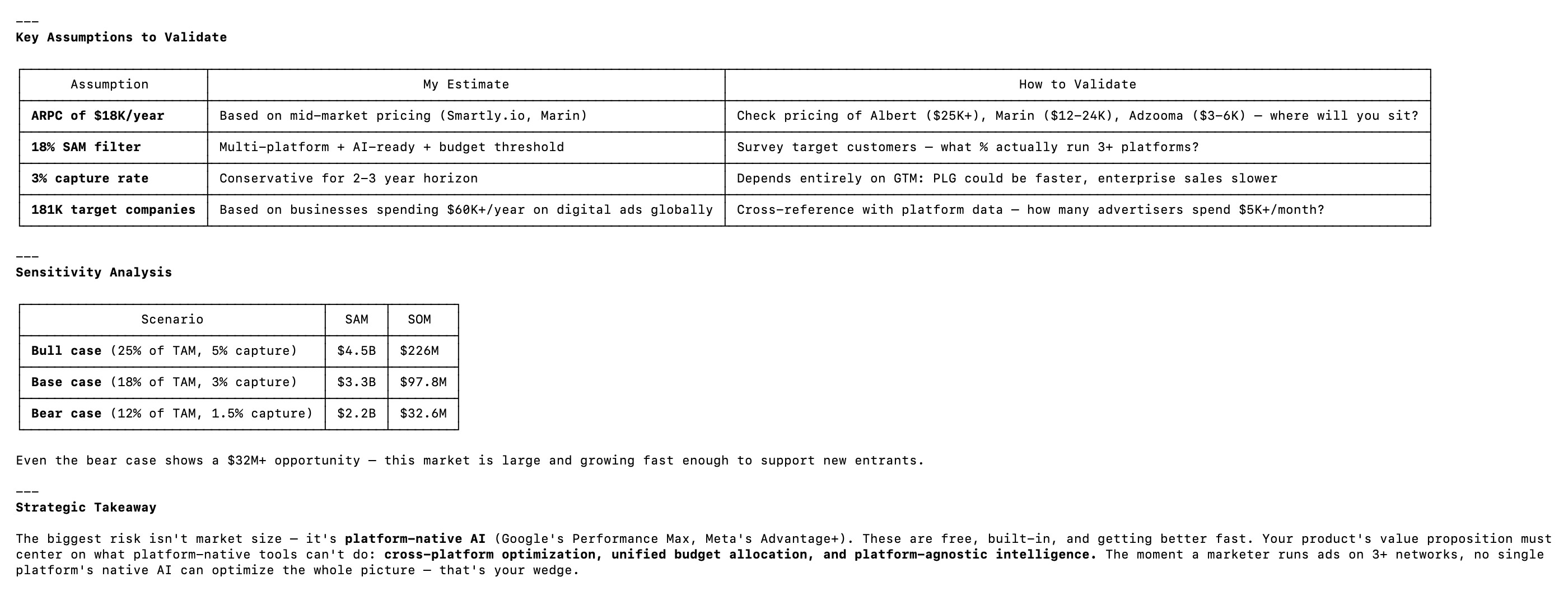

Three web searches for live market data. An $18.1B TAM with sourced growth rates. A competitive landscape table mapping five categories of competitors. A sensitivity analysis with bull, base, and bear cases. An assumptions table with specific validation steps. And a strategic takeaway that identified platform-native AI (Google's Performance Max, Meta's Advantage+) as the real competitive threat.

Not a generic template. Not a ChatGPT-style paragraph that sounds smart but says nothing. A structured strategic analysis with real data, honest confidence levels, and questions designed to pressure-test every assumption.

That output came from strategy-mcp. An open source toolkit I built over the last two weeks. Today I'm shipping it.

Why I Built This

I've spent 20 years using product frameworks. RICE for prioritization. Jobs-to-be-Done for discovery. OKRs for execution. Wardley Maps for strategic positioning. These frameworks aren't optional. They're how good product decisions get made.

When I started building with AI coding tools like Claude Code and Cursor, I realized something frustrating. These tools are brilliant at writing code. They can scaffold an app in minutes. But ask them to apply a product framework and you get a surface-level response. You ask for a RICE score, you get a number with no reasoning. You ask for a competitive analysis, you get a generic 2x2 with no insight.

The frameworks exist. The AI capabilities exist. Nobody had connected them properly.

Then I discovered MCP. It lets you give AI assistants actual tools. Not prompts. Tools. With structured inputs, structured outputs, and built-in domain logic. When I understood what MCP could do, the idea clicked immediately.

I could encode the frameworks I've used for 20 years as tools that any AI assistant can call natively. Not describe them in a prompt. Encode them. With the expert judgment, the pressure-test questions, the follow-up recommendations, and the confidence indicators built in.

So I did. And I decided to do it in public.

I posted a teaser on LinkedIn two weeks ago. Just a short post explaining the idea. Encode product frameworks as MCP tools. Ship them open source. Let any PM use them. It got close to 100 likes and 30 comments. That told me that PMs feel this gap between AI capabilities and structured product thinking. It's not just me.

That early signal created accountability. I had an audience waiting for this to ship. So I built it properly.

What strategy-mcp Actually Does

Every tool in strategy-mcp returns four things. Structured analysis based on your specific inputs. Actionable next steps you can take right now. A confidence indicator that tells you how reliable the output is. And pressure-test questions designed to challenge your assumptions before you commit.

This is not "here's a template, go fill it in." This is "here's the analysis, here's what to do next, and here's how to challenge it."
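To make the four-part shape concrete, here is a minimal Python sketch of what such a response could look like. The class, field names, and rendering format are hypothetical illustrations and are not taken from the strategy-mcp source.

```python
from dataclasses import dataclass, field
from enum import Enum


class Confidence(Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


@dataclass
class ToolResponse:
    """Hypothetical four-part response shape (not strategy-mcp's actual schema)."""
    analysis: str
    next_steps: list[str]
    confidence: Confidence
    pressure_test_questions: list[str] = field(default_factory=list)

    def render(self) -> str:
        """Format the response as plain text, since text is all the user sees."""
        lines = ["ANALYSIS", self.analysis, "", "NEXT STEPS"]
        lines += [f"{i}. {step}" for i, step in enumerate(self.next_steps, start=1)]
        lines += ["", f"CONFIDENCE: {self.confidence.value}", ""]
        lines += ["PRESSURE-TEST QUESTIONS"]
        lines += [f"- {q}" for q in self.pressure_test_questions]
        return "\n".join(lines)


# Placeholder content, for illustration only.
example = ToolResponse(
    analysis="Placeholder analysis text.",
    next_steps=["Interview five target users.", "Re-check the reach estimate."],
    confidence=Confidence.LOW,
    pressure_test_questions=["What evidence supports the impact estimate?"],
)
print(example.render())
```

Keeping all four parts in one typed structure means a tool cannot return a bare score without also stating how much to trust it.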

There are 12 tools grouped across six categories.

Prioritization. RICE scoring that doesn't just calculate a number. It flags when your confidence is low and tells you what to test first.

Discovery. Assumption mapping and Jobs-to-be-Done analysis. These are the steps most teams skip. The tools force you through them.

Positioning. Competitive positioning on custom axes you define. Not another generic SWOT.

Business Model. Business Model Canvas review, TAM/SAM/SOM sizing, and pricing strategy analysis. This is the strategic depth layer.

Execution. OKR generation and initiative scoping. Connecting strategy to the work that actually ships.

Advanced. Wardley assessment, hypothesis builder, and a structured decision log. The expert-level tools that most PMs know about but rarely apply well.

See It In Action

Numbers and descriptions only go so far. Let me show you what this actually looks like when you use it.

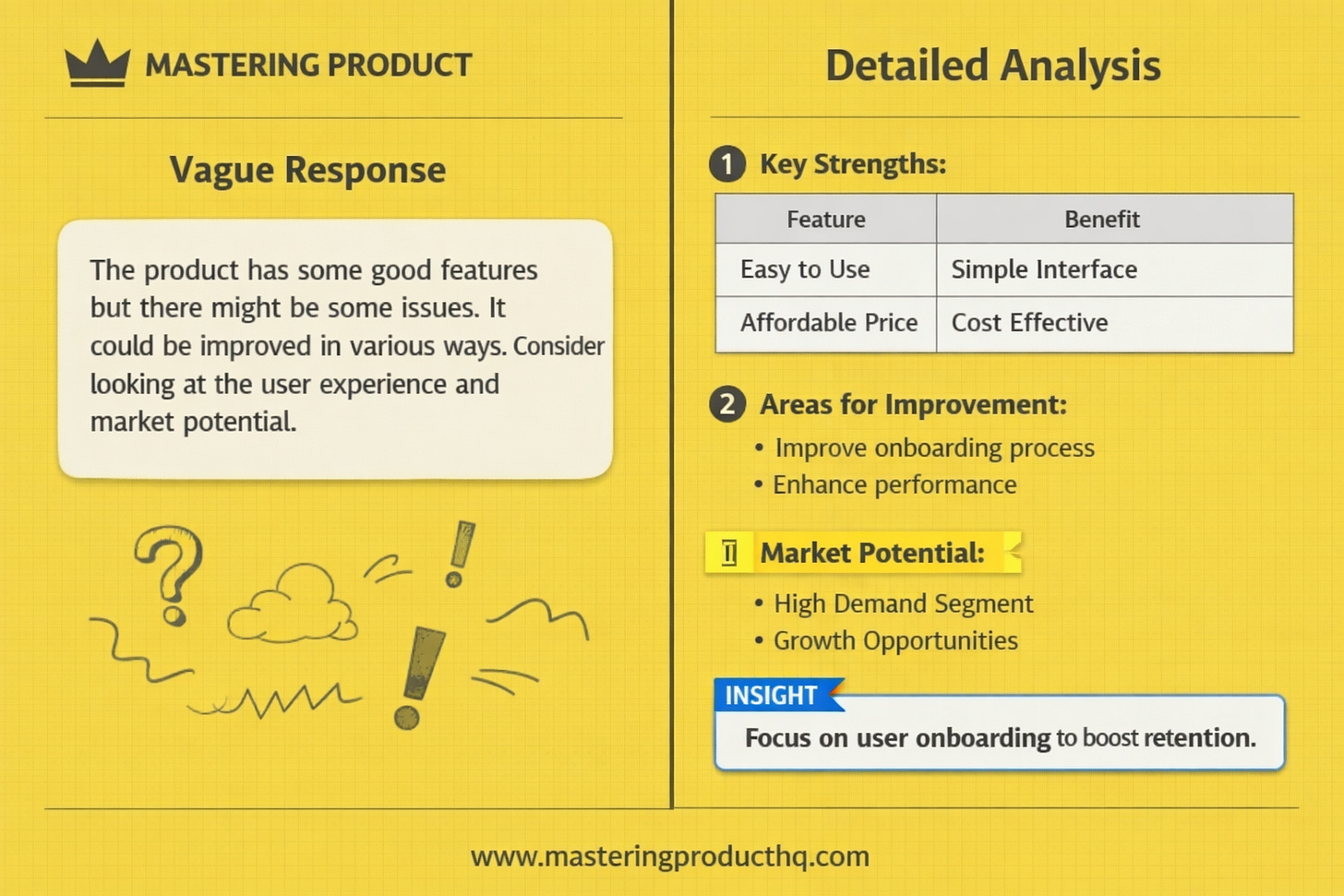

If you asked a standard AI chatbot for a TAM/SAM/SOM, you'd get a paragraph. Maybe some round numbers pulled from training data. No sources. No assumptions table. No way to know if the numbers are current or three years old. You'd spend the next hour verifying everything yourself.

Here's what happened when I typed this into my terminal:

"I need a TAM SAM and SOM for an AI product that does automation for marketeers running campaigns on multiple ad networks."

One sentence. Here's what strategy-mcp did.

That's not a prompt response. That's a structured strategic analysis that would take a PM hours to put together manually. It came back in under a minute.
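The bull, base, and bear cases in a sizing like this come down to simple funnel arithmetic: SAM is a share of TAM, and SOM is a share of SAM. The sketch below assumes that structure. Only the $18.1B TAM comes from the run described earlier; every share in `SCENARIOS` is a made-up placeholder, and `size_market` is a hypothetical helper, not part of strategy-mcp.

```python
TAM_USD_B = 18.1  # TAM in billions of USD, from the analysis described earlier

# Hypothetical scenario shares, for illustration only (not from the real output).
SCENARIOS = {
    "bear": {"sam_share": 0.10, "som_share": 0.01},
    "base": {"sam_share": 0.20, "som_share": 0.03},
    "bull": {"sam_share": 0.30, "som_share": 0.05},
}


def size_market(tam: float, sam_share: float, som_share: float) -> dict:
    """Apply the TAM -> SAM -> SOM funnel for one scenario."""
    sam = tam * sam_share   # serviceable slice of the total market
    som = sam * som_share   # slice of SAM that is realistically obtainable
    return {"tam": tam, "sam": sam, "som": som}


results = {
    name: size_market(TAM_USD_B, **shares) for name, shares in SCENARIOS.items()
}

for name, r in results.items():
    print(f"{name:>4}: SAM ${r['sam']:.2f}B, SOM ${r['som'] * 1000:.0f}M")
```

Laying the scenarios side by side makes it obvious which assumption moves the result most, which is the whole point of the assumptions table.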

Every tool in strategy-mcp works this way. The RICE tool doesn't just give you a score. It challenges your confidence levels. The JTBD tool doesn't just list jobs. It maps the push and pull forces driving customer behavior. The hypothesis builder doesn't just format your guess. It tells you whether your hypothesis is actually testable.

The pattern is the same across all 12. Give the tool your context. Get back structured analysis, next steps, confidence levels, and the questions you should be asking.

What I Learned Building It

Three lessons stood out.

Frameworks need opinions, not just structure.

A RICE calculator is easy to build. A RICE tool that tells you "your confidence is low, here's what to test first" is hard. The difference is encoding expert judgment, not just the formula.

Every PM has used a framework and gotten a number that felt wrong but looked right on paper. You plug in high impact and high confidence because you're excited about the feature. The score comes back strong. You ship it. It flops. The framework didn't fail. Your inputs were wrong and nothing challenged them.

The value of a good framework isn't the math. It's the forcing function that makes you question your own inputs. That's what I tried to encode in every tool. Not just "here's your score" but "here's why you should or shouldn't trust it."
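Here is a rough Python sketch of the difference between a calculator and a tool with opinions. The formula is standard RICE: reach times impact times confidence, divided by effort. The thresholds, warning text, and names such as `RiceInput` and `review` are hypothetical and are not strategy-mcp's actual rules.

```python
from dataclasses import dataclass


@dataclass
class RiceInput:
    reach: float       # people or events affected per time period
    impact: float      # e.g. 0.25 (minimal) up to 3 (massive)
    confidence: float  # 0.0 to 1.0
    effort: float      # person-months, must be positive


# Hypothetical threshold: below this, the score should not be trusted as-is.
LOW_CONFIDENCE = 0.5


def rice_score(item: RiceInput) -> float:
    """Standard RICE formula: (reach * impact * confidence) / effort."""
    if item.effort <= 0:
        raise ValueError("effort must be positive")
    return item.reach * item.impact * item.confidence / item.effort


def review(item: RiceInput) -> dict:
    """Return the score plus warnings that challenge the inputs."""
    warnings = []
    if item.confidence < LOW_CONFIDENCE:
        warnings.append(
            "Confidence is low: validate reach and impact before trusting this score."
        )
    if item.impact >= 2 and item.confidence >= 0.9:
        warnings.append(
            "High impact with very high confidence: what evidence backs both?"
        )
    return {"score": rice_score(item), "warnings": warnings}


result = review(RiceInput(reach=2000, impact=2, confidence=0.4, effort=4))
print(result["score"])  # 2000 * 2 * 0.4 / 4
for warning in result["warnings"]:
    print("WARNING:", warning)
```

`rice_score` alone is the calculator. `review` is where the judgment lives, and those few lines of input checks are what separate the two.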

Output quality is the product.

In an MCP tool, there's no UI. No dashboard. No drag-and-drop interface. The text output IS the experience. Every word in every response had to earn its place.

I rewrote the RICE tool's output format five times. The first version gave you a score and moved on. Fine, technically correct, completely useless in practice. The second version added a breakdown of each factor. Better, but still just a calculator. The final version walks you through each factor, flags where your estimates are weakest, and suggests what to research before making a decision. It tells you when your reach estimate looks inflated relative to your confidence level. It warns you when effort seems underestimated for the scope you described.

Same framework. Completely different experience. The lesson was that in AI tooling, writing quality is product quality. They're the same thing. If you're building MCP tools, you're a writer whether you realize it or not.

MCP is the distribution layer for expertise.

This was the real insight for me. MCP doesn't just connect AI to tools. It lets domain experts ship their expertise as software.

Think about what that means. I spent 20 years learning when a RICE score is misleading. When a TAM estimate needs a tighter filter. When a hypothesis is testable versus just wishful thinking. That judgment used to live in my head. Now it lives in a tool that any PM can install and use inside any AI conversation.

If you're an expert in anything, you can encode your expertise into an MCP tool. Lawyers could encode contract review frameworks. Designers could encode accessibility audits. Marketers could encode campaign analysis. MCP turns domain expertise into portable, reusable software. That's a big deal.

What's Next

strategy-mcp is open source under the MIT license. You can install it today. Contributions are welcome. If there's a framework you use regularly that isn't included, open an issue or submit a PR.

It's also the first of three open source tools I'm shipping in an 8-week build-in-public series.

strategy-mcp helps you think strategically. founder-mode (coming in two weeks) turns Claude Code into an AI co-founder for solo builders. agent-pm connects AI to your execution layer.

Think. Build. Execute. Three tools, one series.

I'll be documenting everything as I go. The decisions, the tradeoffs, the things that broke. If the LinkedIn teaser taught me anything, it's that PMs want to see how these tools get built, not just use the finished product.

Try It Now

Install strategy-mcp with one command. Open Claude Code or any MCP-compatible AI assistant. Ask it to run a RICE analysis on your current feature backlog. Or size a market you've been thinking about. Or map the assumptions behind your latest product bet. Or generate OKRs for next quarter.

Pick the framework you use most. Run it through the tool. Compare the output to what you'd get from a generic AI prompt. The difference will be obvious.

Then tell me what you think. I read every response.

https://github.com/sohaibt/strategy-mcp. The README file has more details on installation. To install it locally, run these terminal commands:

git clone https://github.com/sohaibt/strategy-mcp.git

cd strategy-mcp

uv run python server.py

What expertise would you encode as a tool if you could? That question has been stuck in my head since I started this project. I'd love to hear your answer.