The AI-Powered Discovery Sprint: How to Validate Product Ideas in 2 Weeks With Half the Effort

How Modern Product Teams Validate Ideas Before Writing a Single Line of Code, and How AI Can Accelerate the Sprint

Welcome to this week's edition of Mastering Product! Today, we're tackling one of the most common traps in product management: spending months building something your customers never asked for. I'm going to walk you through a framework I've used and refined over many years of leading product teams: the Discovery Sprint. It's a structured 2-week process that helps teams go from a fuzzy opportunity to a validated direction with speed and confidence. And with the AI tools available to product teams today, each phase of this sprint can move faster and go deeper than ever before. Let's dive in.

The Validation Gap

Here's a scenario I've seen play out too many times: a product team spends an entire quarter building a feature based on a compelling internal thesis, ships it, and then watches the adoption numbers flatline. The post-mortem reveals what everyone suspected but nobody tested: the problem they were solving wasn't the problem users actually had, or it wasn't as big as they thought it was.

Teams that run structured discovery consistently outperform those that don't, and not by a small margin: by roughly 3x on features that hit their success metrics. Teams that spend two weeks validating before they build save months of wasted development effort.

The root cause is always the same: teams jump from opportunity to solution without a disciplined validation step in between.

The Discovery Sprint fills that gap. It's not a replacement for continuous discovery or long-term research programs. It's a focused, time-boxed intervention you deploy when you need to make a high-stakes product decision and don't yet have sufficient evidence to do so confidently.

What's changed in 2025-2026 is the toolkit. AI has compressed what used to take days into hours: interview synthesis, competitive research, rapid prototyping, even preliminary concept testing. The discipline of the sprint still matters. But the speed at which you can gather and act on evidence has fundamentally shifted.

When to Deploy a Discovery Sprint

Not every decision warrants a 2-week sprint. Use this framework when:

The stakes are high: You're committing engineering resources for a quarter or more

Uncertainty is high: You have strong opinions but weak evidence (Level 1-2 on the Pyramid of Evidence)

Alignment is low: Stakeholders disagree on the problem, the solution, or the priority

The opportunity is ambiguous: Customer signals are mixed or contradictory

A good rule of thumb: if a feature would take more than 6 weeks of engineering effort, it should survive a Discovery Sprint before entering the backlog. This single policy can prevent dozens of wasted initiatives per year.
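If you want to turn the triggers above into a checklist for backlog grooming, here's a minimal Python sketch. The `Initiative` record and its field names are hypothetical, made up for illustration; it uses the 6-week rule of thumb as the high-stakes test and treats Level 1-2 on the Pyramid of Evidence as the high-uncertainty signal. Treat it as a starting point for a team conversation, not a policy engine.

```python
from dataclasses import dataclass


@dataclass
class Initiative:
    # Hypothetical fields mirroring the four triggers above.
    name: str
    engineering_weeks: float    # estimated engineering effort
    evidence_level: int         # current Pyramid of Evidence level (higher = stronger)
    stakeholders_aligned: bool  # do stakeholders agree on problem, solution, priority?
    signals_mixed: bool         # are customer signals mixed or contradictory?


def sprint_triggers(item: Initiative) -> list[str]:
    """Return the triggers that apply; an empty list means no sprint is indicated."""
    triggers = []
    if item.engineering_weeks > 6:  # the 6-week rule of thumb
        triggers.append("high stakes: more than 6 weeks of engineering")
    if item.evidence_level <= 2:
        triggers.append("high uncertainty: Level 1-2 evidence")
    if not item.stakeholders_aligned:
        triggers.append("low alignment")
    if item.signals_mixed:
        triggers.append("ambiguous opportunity")
    return triggers


checkout = Initiative("new checkout flow", engineering_weeks=10, evidence_level=2,
                      stakeholders_aligned=True, signals_mixed=False)
print(sprint_triggers(checkout))
# ['high stakes: more than 6 weeks of engineering', 'high uncertainty: Level 1-2 evidence']
```

Any non-empty result is a prompt to run a sprint before the initiative enters the backlog.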

The Discovery Sprint Framework: 2 Weeks, 5 Phases

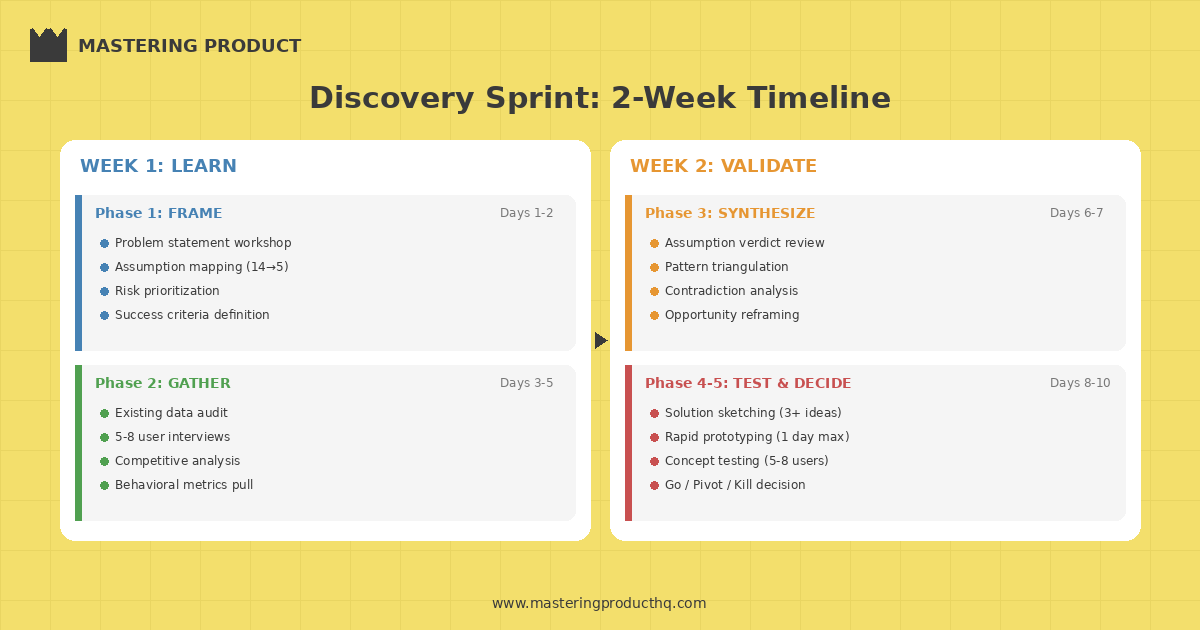

The Discovery Sprint compresses the essential elements of product discovery into a structured 2-week cadence. Each phase builds on the previous one, creating an escalating body of evidence. AI can shorten this cadence considerably; the "How AI accelerates this phase" notes in each phase below show how.

Phase 1: Frame (Days 1-2)

The first two days are about getting crystal clear on what you're trying to learn—not what you're trying to build.

Key Activities:

Problem Statement Workshop: Bring the product trio (PM, designer, tech lead) together to articulate the problem in a single sentence. Use this template: "We believe [user segment] struggles with [problem] when trying to [goal], which results in [negative outcome]."

Assumption Mapping: List every assumption embedded in your current thinking. Categorize them into:

Desirability assumptions: Do users actually want this?

Viability assumptions: Does this create business value?

Feasibility assumptions: Can we actually build this?

Risk Prioritization: Rank assumptions on two dimensions: how critical they are to the initiative's success, and how little evidence you currently have. The assumptions that score high on both dimensions become your sprint's investigation targets.

Success Criteria Definition: Define what "validated" and "invalidated" look like before you gather any evidence. This prevents post-hoc rationalization.

Output: A Discovery Sprint Brief containing your problem statement, the top 3-5 riskiest assumptions, and clear validation/invalidation criteria for each.
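Here's one way to sketch the Phase 1 mechanics in Python: fill the problem-statement template, score each assumption on criticality and evidence, and pull the riskiest ones into the brief together with their pre-defined pass/fail criteria. The 1-5 scales and the multiplicative score are my assumptions for illustration (any scoring that rewards "critical and poorly evidenced" works), and the example assumptions are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Assumption:
    text: str
    category: str        # "desirability", "viability", or "feasibility"
    criticality: int     # 1 = minor .. 5 = initiative fails if wrong (assumed scale)
    evidence: int        # 1 = no evidence .. 5 = strong evidence (assumed scale)
    validated_if: str    # pass criterion, written before any evidence is gathered
    invalidated_if: str  # fail criterion, written before any evidence is gathered


def problem_statement(segment: str, problem: str, goal: str, outcome: str) -> str:
    """Fill the one-sentence template from the Problem Statement Workshop."""
    return (f"We believe {segment} struggles with {problem} "
            f"when trying to {goal}, which results in {outcome}.")


def risk_score(a: Assumption) -> int:
    """Hypothetical scoring: high criticality and weak evidence both raise the score."""
    return a.criticality * (6 - a.evidence)


def sprint_targets(assumptions: list[Assumption], top_n: int = 5) -> list[Assumption]:
    """Riskiest assumptions first; the top few become the sprint's investigation targets."""
    return sorted(assumptions, key=risk_score, reverse=True)[:top_n]


assumptions = [
    Assumption("Finance leads reconcile invoices by hand every week", "desirability",
               5, 2, ">=5 of 8 interviewees describe it unprompted", "<=2 of 8 mention it"),
    Assumption("Teams will pay extra for automated reconciliation", "viability",
               4, 2, ">=3 of 8 ask about pricing", "nobody would switch tools for it"),
    Assumption("We can read invoices from the top 3 accounting tools", "feasibility",
               3, 4, "a spike parses sample exports", "any tool lacks an export API"),
]
for a in sprint_targets(assumptions, top_n=2):
    print(risk_score(a), a.category, "-", a.text)
```

Keeping `validated_if` and `invalidated_if` on the same record as the assumption makes it hard to start gathering evidence before the criteria exist.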

How AI accelerates this phase: Use tools like Miro AI or FigJam AI to facilitate assumption mapping workshops; they can auto-cluster sticky notes, suggest missing assumption categories, and generate initial problem statement drafts from messy brainstorm inputs. You can also feed your product brief into Claude and ask it to play the role of a critical product reviewer: "What are the top 10 assumptions embedded in this product brief that we haven't validated?" You'll be surprised how many blind spots surface in minutes.

Phase 2: Gather (Days 3-5)

With your riskiest assumptions identified, you now go find evidence. This phase uses a multi-method approach because no single evidence source is sufficient.

Key Activities:

Existing Data Audit: Before collecting anything new, mine what you already have (analytics data, support tickets, previous research, NPS verbatims, session recordings). You'd be surprised how often the answer is already sitting in your data warehouse.

User Interviews (5-8 participants): Conduct problem-focused interviews. The goal is to understand the user's world, not to pitch your solution. Use the "tell me about the last time..." technique to uncover actual behaviors rather than hypothetical preferences.

Competitive & Analogous Analysis: Study how others have solved similar problems, looking not just at direct competitors but also at analogous solutions in adjacent domains.

Quantitative Signal Check: Pull relevant behavioral metrics. What are users actually doing today that relates to this problem space?

Output: An Evidence Board organized by assumption, showing what you found across each evidence source.

How AI accelerates this phase: This is where AI tools create the biggest time savings:

Interview analysis: Record your user interviews (with consent) and run the transcripts through Dovetail AI or Grain. These tools auto-tag themes, extract key quotes, and surface patterns across multiple interviews in minutes instead of hours. You can also paste raw transcripts into Claude and ask: "What are the top 5 unmet needs expressed across these interviews, ranked by frequency and emotional intensity?"

Competitive analysis at scale: Use Perplexity or Claude with web search to map how competitors and analogous products solve the problem you're investigating. What used to take a full day of browsing can now be a 30-minute structured analysis. Ask: "How do the top 10 [category] products handle [specific problem]? Compare their approaches in a table."

Support ticket mining: Feed a sample of support tickets or NPS verbatims into an LLM and ask it to categorize complaints, identify recurring themes, and quantify frequency. Tools like MonkeyLearn or simple Claude prompts can process hundreds of tickets in minutes.

Synthetic user validation: Tools like Synthetic Users let you simulate early user reactions to problem statements before you invest time in live interviews. These aren't a replacement for real users—but they're a useful directional signal that can sharpen your interview guide.
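In the support-ticket workflow above, an LLM does the categorization. The sketch below swaps in a plain keyword matcher so the counting step is visible, and so you have a cheap way to spot-check the theme counts an LLM reports back. The theme names and keywords are hypothetical.

```python
from collections import Counter

# Hypothetical theme -> keyword map; in the workflow above an LLM proposes the themes.
THEMES = {
    "export": ["export", "csv", "download"],
    "performance": ["slow", "timeout", "lag"],
    "billing": ["invoice", "charge", "refund"],
}


def tag_ticket(text: str) -> set[str]:
    """Themes whose keywords appear in the ticket. Plain substring matching,
    so short keywords can over-match (e.g. "lag" inside "flag")."""
    lowered = text.lower()
    return {theme for theme, words in THEMES.items()
            if any(word in lowered for word in words)}


def theme_frequency(tickets: list[str]) -> list[tuple[str, int]]:
    """Tickets per theme, most frequent first (ties broken alphabetically).
    A ticket that touches several themes is counted once under each."""
    counts = Counter()
    for ticket in tickets:
        counts.update(tag_ticket(ticket))
    return sorted(counts.items(), key=lambda kv: (-kv[1], kv[0]))


tickets = [
    "Export to CSV fails",
    "Dashboard is slow",
    "CSV download times out",
    "Wrong invoice amount",
]
print(theme_frequency(tickets))  # [('export', 2), ('billing', 1), ('performance', 1)]
```

If the LLM's frequency ranking and a crude count like this disagree sharply, that's a cue to read a sample of the tickets yourself.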

Pro Tip: Assign each evidence source a confidence weight using the Pyramid of Evidence levels. An insight supported by behavioral analytics (Level 4) carries more weight than one based solely on user interviews (Level 3), which in turn outweighs competitive analysis (Level 2). AI-generated insights from synthetic users sit at Level 1-2 at best, useful for hypothesis generation, never for validation.
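A minimal sketch of the Pro Tip's weighting, assuming each piece of evidence is tagged with its Pyramid level and whether it supports or contradicts the assumption. Using the level itself as the weight is an illustrative choice, not a standard, and synthetic-user output is excluded entirely because it is for hypothesis generation only. The source names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Evidence:
    source: str     # e.g. "analytics", "interviews", "competitive", "synthetic_users"
    level: int      # Pyramid of Evidence level, higher = stronger
    supports: bool  # True if it supports the assumption, False if it contradicts it


# Per the Pro Tip: synthetic-user output generates hypotheses, never validates.
HYPOTHESIS_ONLY = {"synthetic_users"}


def weighted_support(items: list[Evidence]) -> float:
    """Net support in [-1, 1], weighting each item by its level (assumed scheme)."""
    usable = [e for e in items if e.source not in HYPOTHESIS_ONLY]
    total = sum(e.level for e in usable)
    if total == 0:
        return 0.0  # nothing usable: no signal either way
    net = sum(e.level if e.supports else -e.level for e in usable)
    return net / total


items = [
    Evidence("analytics", 4, True),
    Evidence("interviews", 3, False),
    Evidence("competitive", 2, True),
    Evidence("synthetic_users", 1, True),  # ignored by weighted_support
]
print(round(weighted_support(items), 2))  # 0.33
```

A weak positive score like this one, with a Level 3 source disagreeing, is exactly the kind of contradiction worth digging into during synthesis.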

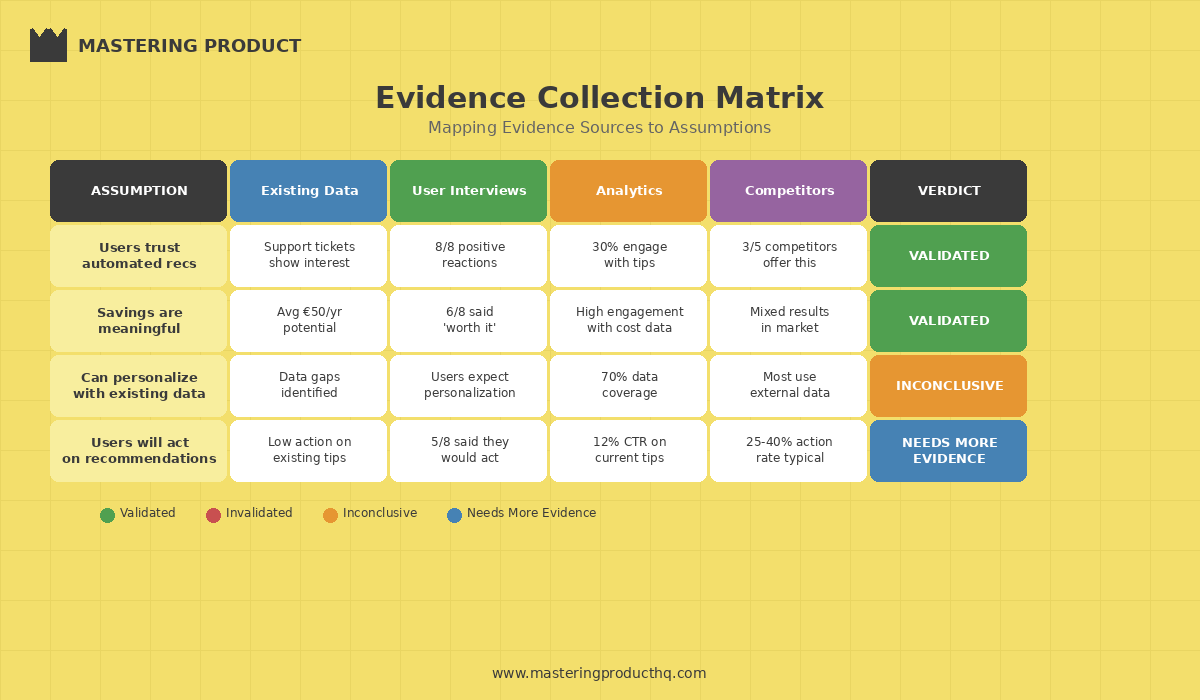

Phase 3: Synthesize (Days 6-7)

This is the most intellectually demanding phase and the one teams most often skip. Gathering evidence without synthesizing it is just data hoarding.

Key Activities:

Assumption Verdict Review: For each of your target assumptions, lay out all gathered evidence and make a call: Validated, Invalidated, or Inconclusive. Apply your pre-defined criteria from Phase 1.

Pattern Triangulation: Look for insights confirmed by multiple evidence types. A finding that shows up in user interviews AND behavioral data AND competitive analysis is far more reliable than one that appears in a single source.

Contradiction Analysis: Pay special attention to evidence that conflicts. These contradictions often reveal the most interesting insights. A classic example: users say they want more options (qualitative) but behavioral data shows that adding options reduces conversion. The synthesis often reveals that users want better defaults, not more controls.

Opportunity Reframing: Based on what you've learned, reframe the original problem statement. It's common for the real opportunity to be adjacent to but different from where you started.

Output: A Synthesis Document with assumption verdicts, key insights, and an updated problem statement.
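The mechanical half of synthesis can be sketched in a few lines of Python: apply the thresholds you wrote down in Phase 1 to get a verdict, and list the findings backed by at least two distinct evidence types. The `Criteria` thresholds and the example findings (borrowed from the defaults-versus-options contradiction above) are hypothetical. The output feeds the trio's discussion; the verdict is still the team's call.

```python
from dataclasses import dataclass


@dataclass
class Criteria:
    # Pre-defined in Phase 1, e.g. "interviewees (out of 8) who describe the problem unprompted".
    validate_at: float    # observed value at or above this -> Validated
    invalidate_at: float  # observed value at or below this -> Invalidated


def verdict(observed: float, c: Criteria) -> str:
    if observed >= c.validate_at:
        return "Validated"
    if observed <= c.invalidate_at:
        return "Invalidated"
    return "Inconclusive"  # evidence landed between the two thresholds


def triangulated(findings: dict[str, set[str]], min_types: int = 2) -> list[str]:
    """Findings confirmed by at least `min_types` distinct evidence types."""
    return sorted(f for f, types in findings.items() if len(types) >= min_types)


unprompted = Criteria(validate_at=5, invalidate_at=2)
print([verdict(n, unprompted) for n in (6, 3, 1)])
# ['Validated', 'Inconclusive', 'Invalidated']

findings = {
    "users want better defaults": {"interviews", "analytics", "competitive"},
    "users want more options": {"interviews"},
}
print(triangulated(findings))  # ['users want better defaults']
```

Writing the thresholds as data in Phase 1 is what makes the Phase 3 verdict hard to rationalize after the fact.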

How AI accelerates this phase: Feed all your evidence (interview summaries, data findings, competitive analysis) into Claude or ChatGPT as a single context and ask it to identify contradictions, patterns, and gaps. Prompt example: "Here is evidence from 3 sources about [assumption]. Source 1 suggests X, Source 2 suggests Y, Source 3 suggests Z. Where do these converge? Where do they contradict? What's the most likely truth?"

A critical warning here: AI is excellent at pattern-matching across large bodies of evidence. It is terrible at judgment calls. The synthesis tool should surface patterns and contradictions—but the product trio makes the verdict. Never outsource assumption verdicts to an AI. The team's contextual understanding of the business, users, and technical constraints is irreplaceable.

Phase 4: Prototype & Test (Days 8-10)

Now, and only now, do you start exploring solutions. This phase is about testing direction, not building product.

Key Activities:

Solution Sketching: Generate multiple solution concepts. Aim for at least three distinct approaches. Involve the full product trio to ensure diversity of thinking.

Rapid Prototyping: Build lightweight prototypes of the top 1-2 concepts. These should be the minimum fidelity needed to test your key assumption: sometimes that's a clickable mockup, sometimes it's a spreadsheet, sometimes it's a concierge version you run manually.

Concept Testing (5-8 participants): Put prototypes in front of users and observe. Focus on:

Does the user understand what this is?

Does it address the problem they actually have?

Would they use this in their real workflow?

What's missing or confusing?

Feasibility Check: Have your tech lead assess the top concept's technical complexity, dependencies, and risks.

Output: Tested prototype(s) with user feedback, a feasibility assessment, and a recommended direction.

How AI accelerates this phase: This is where the 2025 AI toolkit has been transformative:

From sketch to prototype in hours: Tools like Replit, Bolt, and Lovable can generate working front-end prototypes from text descriptions or rough wireframes. Describe your concept in natural language and get a clickable prototype you can test with real users in a single afternoon instead of 2-3 days of designer/developer time.

AI-assisted design: Figma AI features and plugins can generate UI variations from a single design concept, helping you explore multiple directions without starting from scratch each time.

Unmoderated concept testing: Tools like Maze allow you to set up unmoderated prototype tests that users complete asynchronously. Combined with AI-generated prototypes, you can go from concept to user feedback within 24 hours.

Feasibility estimation: Have your tech lead use AI coding assistants like Cursor or GitHub Copilot to spike on the most uncertain technical components. A few hours of AI-assisted exploration can reveal complexity that would otherwise only surface weeks into development.

The mindset shift: With AI prototyping tools, the bottleneck is no longer "how fast can we build a prototype?" It's "how clearly can we define what to test?" This makes the Frame and Gather phases even more critical. Teams that rush to prototype with AI tools before framing the right question just build the wrong thing faster.

Phase 5: Decide (Days 9-10)

The final phase converts your evidence into a decision and a plan.

Key Activities:

Evidence-Based Recommendation: Present a structured recommendation using this format:

| Element | Detail |
|---------|--------|
| **Recommendation** | Proceed / Pivot / Kill |
| **Confidence Level** | High / Medium / Low |
| **Key Evidence** | Top 3 supporting findings |
| **Remaining Risks** | What we still don’t know |
| **Next Steps** | Specific actions with owners and timelines |

Stakeholder Alignment Session: Walk key stakeholders through the evidence journey, not just the conclusion. When people see the evidence path, they're far more likely to support the decision, even if it contradicts their initial expectations.

Scope Definition: If proceeding, define the smallest meaningful first release (what I call the "evidence-based MVP") that will generate the next layer of learning.

Measurement Plan: Define the success metrics, tracking approach, and decision points for the build phase.

Output: A Sprint Decision Document and, if proceeding, an evidence-based MVP scope.
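Because the recommendation always uses the same five rows, it can help to hold it as structured data and render the table from that, whether a person or an AI tool drafts the prose around it. The class and field names below are hypothetical; the sketch only checks and formats what the team decided, it does not make the call.

```python
from dataclasses import dataclass

RECOMMENDATIONS = {"Proceed", "Pivot", "Kill"}
CONFIDENCE_LEVELS = {"High", "Medium", "Low"}


@dataclass
class SprintDecision:
    recommendation: str
    confidence: str
    key_evidence: list[str]     # top 3 supporting findings
    remaining_risks: list[str]  # what we still don't know
    next_steps: list[str]       # each with an owner and a timeline

    def __post_init__(self):
        # Reject values outside the format's allowed options.
        if self.recommendation not in RECOMMENDATIONS:
            raise ValueError(f"recommendation must be one of {sorted(RECOMMENDATIONS)}")
        if self.confidence not in CONFIDENCE_LEVELS:
            raise ValueError(f"confidence must be one of {sorted(CONFIDENCE_LEVELS)}")
        if len(self.key_evidence) > 3:
            raise ValueError("keep key evidence to the top 3 findings")

    def to_markdown(self) -> str:
        """Render the recommendation table used in the Decide phase."""
        rows = [
            ("Recommendation", self.recommendation),
            ("Confidence Level", self.confidence),
            ("Key Evidence", "; ".join(self.key_evidence)),
            ("Remaining Risks", "; ".join(self.remaining_risks)),
            ("Next Steps", "; ".join(self.next_steps)),
        ]
        lines = ["| Element | Detail |", "|---------|--------|"]
        lines += [f"| **{name}** | {value} |" for name, value in rows]
        return "\n".join(lines)


decision = SprintDecision(
    "Pivot", "Medium",
    ["users want better defaults, not more options"],
    ["willingness to pay is untested"],
    ["PM: define default presets by Friday"],
)
print(decision.to_markdown())
```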

How AI accelerates this phase: Use AI to draft your Sprint Decision Document. Feed it the synthesis findings, assumption verdicts, and prototype feedback, and ask it to structure a stakeholder-ready recommendation. Tools like Gamma or Beautiful.ai can turn your findings into a polished presentation deck in minutes. But remember: the recommendation itself must come from the team, not the tool.

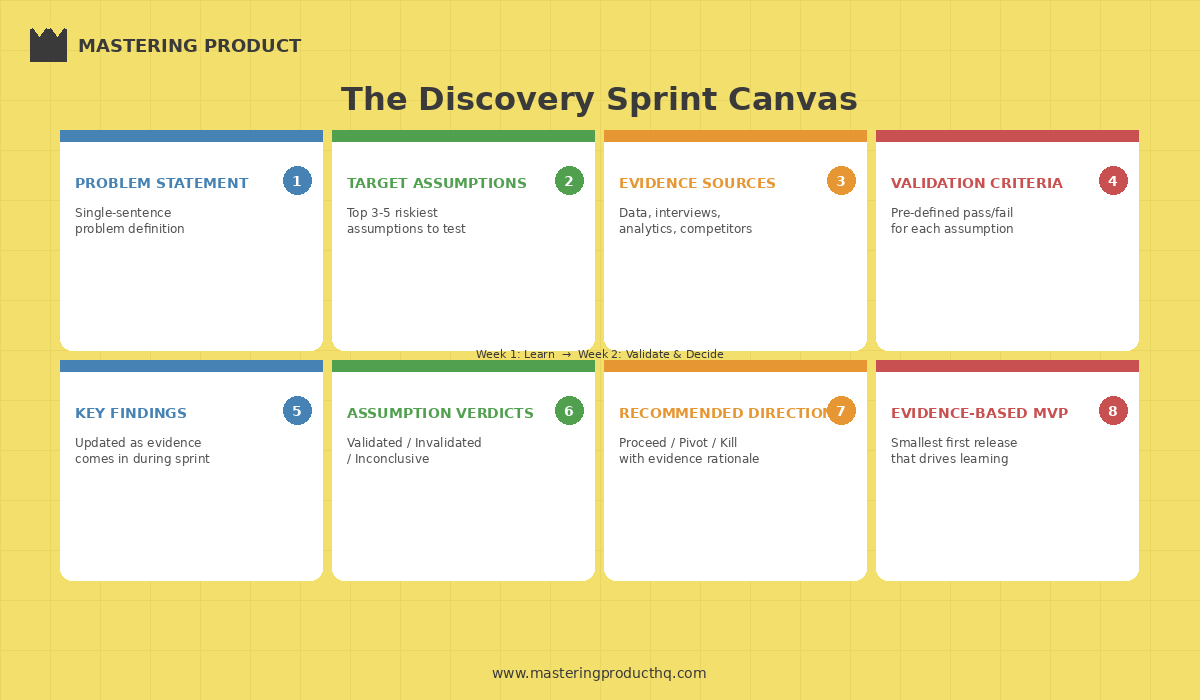

The Discovery Sprint Canvas

To keep the sprint organized, I use a one-page canvas that the team references throughout the two weeks:

| Section | Content |
|---------|---------|
| **Problem Statement** | Single-sentence problem definition |
| **Target Assumptions** | Top 3-5 riskiest assumptions |
| **Evidence Sources** | What data/research you’ll gather |
| **Validation Criteria** | Pre-defined pass/fail for each assumption |
| **Key Findings** | Updated as evidence comes in |
| **Assumption Verdicts** | Validated / Invalidated / Inconclusive |
| **Recommended Direction** | Proceed / Pivot / Kill with rationale |
| **Evidence-Based MVP** | Smallest first release that drives learning |

This canvas serves as both a planning tool and a communication artifact. Pin it in your team's Slack channel or Notion workspace and update it daily. It keeps the sprint focused and transparent.

The AI-Augmented Discovery Stack

Here's a quick reference of AI tools mapped to each sprint phase:

| Phase | Tool | What It Does |
|-------|------|-------------|
| **Frame** | Claude / ChatGPT | Stress-test assumptions, generate blind-spot analysis |
| **Frame** | Miro AI / FigJam AI | Facilitate and cluster brainstorm workshops |
| **Gather** | Dovetail AI / Grain | Auto-analyze interview transcripts |
| **Gather** | Perplexity / Claude | Rapid competitive and market analysis |
| **Gather** | Synthetic Users | Directional signal before live interviews |
| **Synthesize** | Claude / ChatGPT | Cross-reference evidence, surface contradictions |
| **Prototype** | v0 / Bolt / Lovable | Generate working prototypes from descriptions |
| **Prototype** | Cursor / Copilot | Spike on technical feasibility |
| **Test** | Maze | Unmoderated, asynchronous concept testing |
| **Decide** | Gamma / Beautiful.ai | Auto-generate stakeholder presentations |

A word of caution: AI tools accelerate each phase, but they don't replace the thinking that connects them. The sequence (Frame before you Gather, Gather before you Synthesize, Synthesize before you Prototype) exists for a reason. I've watched teams use AI to prototype in hours, only to discover they were prototyping the wrong thing because they skipped framing. Speed without direction is just expensive chaos.

Common Pitfalls (And How to Avoid Them)

After running dozens of Discovery Sprints across different organizations, I've seen the same mistakes repeatedly:

1. Solution Bias in the Frame Phase

Teams jump to "how might we build X?" instead of "what problem are we solving?" The fix: ban solution language in the first two days. If someone mentions a feature, redirect them to the underlying need.

2. Confirmation Bias in Evidence Gathering

Teams unconsciously seek evidence that supports their preferred direction. The fix: assign a "devil's advocate" role to someone in each interview and data review session. Their job is to actively look for disconfirming evidence. You can also prompt an LLM with "Give me the strongest argument against this assumption"; LLMs are surprisingly good at playing devil's advocate.

3. Skipping Synthesis

Teams collect evidence and jump straight to prototyping without synthesizing. The fix: make the Synthesis phase a formal, time-boxed session with the full product trio. No prototyping until assumption verdicts are complete.

4. Over-Scoping the Prototype

Teams build too much fidelity into prototypes, wasting time and creating attachment to a specific solution. The fix: set a hard rule. If the prototype takes more than one day to build, it's too complex. With AI tools like v0 or Bolt, there's no excuse to spend more than a few hours on a testable concept.

5. Ignoring the "Kill" Decision

Teams treat Discovery Sprints as a validation exercise rather than a genuine learning exercise. The most valuable outcome is sometimes "we should NOT build this." The fix: celebrate kill decisions. A well-evidenced kill can save hundreds of thousands in development costs and free the team to pursue higher-impact opportunities.

6. Over-Trusting AI Outputs

This is a newer pitfall. Teams feed messy data into an LLM, get a confident-sounding synthesis, and treat it as ground truth. AI-generated insights are a starting point for discussion, not a conclusion. Always verify AI-surfaced patterns against your raw evidence. If the AI claims a pattern exists, go check the transcripts yourself.
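To make "go check the transcripts yourself" faster, here's a small Python sketch that flags any quote an LLM attributes to users that doesn't appear in the raw transcripts. It assumes plain-text transcripts and normalizes case and punctuation before matching whole words. It only catches invented or paraphrased quotes, not a pattern the model misread from real ones, so it complements reading the evidence rather than replacing it.

```python
import re


def normalize(text: str) -> str:
    """Lowercase and collapse punctuation/whitespace so formatting differences don't matter."""
    return re.sub(r"[^a-z0-9]+", " ", text.lower()).strip()


def unverified_quotes(ai_quotes: list[str], transcripts: list[str]) -> list[str]:
    """AI-cited quotes that appear in no transcript (whole-word match after normalizing)."""
    # Pad with spaces so "i export" does not match inside "i exported".
    corpus = [f" {normalize(t)} " for t in transcripts]
    return [q for q in ai_quotes
            if not any(f" {normalize(q)} " in t for t in corpus)]


transcripts = [
    "I export everything to Excel, every Friday.",
    "Honestly, the dashboard is fine.",
]
ai_quotes = ["I export everything to Excel", "The dashboard is too slow"]
print(unverified_quotes(ai_quotes, transcripts))  # ['The dashboard is too slow']
```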

Measuring Discovery Sprint Effectiveness

To continuously improve your discovery practice, track these metrics:

| Metric | What It Measures | Target |
|--------|-----------------|--------|
| **Assumption Hit Rate** | % of assumptions correctly predicted by the sprint | >70% |
| **Decision Confidence** | Team’s confidence in the go/no-go decision (1-10) | >7 |
| **Build Success Rate** | % of post-sprint builds that hit success metrics | >60% |
| **Time to Decision** | Calendar days from sprint start to decision | ≤10 |
| **Kill Rate** | % of sprints resulting in a kill decision | 20-40% (healthy range) |

If your kill rate is below 20%, you're probably only running sprints on safe bets. If it's above 40%, your opportunity identification process may need attention.
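If you log each sprint as a simple record, several of these metrics take only a few lines of Python. This sketch assumes a hypothetical `SprintRecord` shape, counts only sprints that led to a build toward Build Success Rate, and encodes the 20-40% kill-rate reading from the paragraph above. Assumption Hit Rate and Decision Confidence are left out because they need inputs (later outcomes, team survey scores) you'd collect separately.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class SprintRecord:
    decision: str              # "Proceed", "Pivot", or "Kill"
    days_to_decision: int      # calendar days from sprint start to decision
    built: bool = False        # did a build follow the sprint?
    hit_metrics: bool = False  # did that build hit its success metrics?


def kill_rate(records: list[SprintRecord]) -> float:
    return sum(r.decision == "Kill" for r in records) / len(records)


def build_success_rate(records: list[SprintRecord]) -> Optional[float]:
    builds = [r for r in records if r.built]
    if not builds:
        return None  # no post-sprint builds yet, so nothing to measure
    return sum(r.hit_metrics for r in builds) / len(builds)


def on_time_share(records: list[SprintRecord], max_days: int = 10) -> float:
    """Share of sprints that reached a decision within the Time to Decision target."""
    return sum(r.days_to_decision <= max_days for r in records) / len(records)


def kill_rate_health(rate: float) -> str:
    """Read the kill rate against the 20-40% healthy range."""
    if rate < 0.20:
        return "below 20%: probably only running sprints on safe bets"
    if rate > 0.40:
        return "above 40%: opportunity identification may need attention"
    return "healthy (20-40%)"


history = [
    SprintRecord("Proceed", 10, built=True, hit_metrics=True),
    SprintRecord("Proceed", 12, built=True, hit_metrics=False),
    SprintRecord("Kill", 9),
    SprintRecord("Pivot", 10, built=True, hit_metrics=True),
    SprintRecord("Proceed", 8, built=True, hit_metrics=True),
]
rate = kill_rate(history)
print(rate, kill_rate_health(rate))  # 0.2 healthy (20-40%)
print(build_success_rate(history))   # 0.75
print(on_time_share(history))        # 0.8
```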

Getting Started: Your First Discovery Sprint

If you've never run a Discovery Sprint before, here's how to start:

Pick a live decision. Choose an upcoming initiative where the team has high conviction but low evidence. This gives you a real scenario with genuine stakes.

Assemble the core trio. You need a PM, a designer, and a tech lead committed for the full two weeks. Other stakeholders participate in specific sessions but don't need to be full-time.

Block the calendar. Protect the sprint time aggressively. The biggest enemy of discovery is "just this one meeting." Two focused weeks beats two months of fragmented effort.

Set up your AI toolkit. Before the sprint starts, make sure the team has access to the tools they'll need: an LLM for analysis, a prototyping tool, and a user testing platform. Don't waste Day 1 on procurement.

Set expectations. Be explicit with stakeholders that the sprint may result in a kill decision, and that this is a valid, valuable outcome.

Use the canvas. Pin it in Slack, update it daily. It creates accountability and visibility.

Discovery as a Product Superpower

The best product teams share one trait: they treat validation as a non-negotiable step, not a nice-to-have. The Discovery Sprint makes this practical by giving teams a repeatable, time-boxed process that fits into real-world product development cadences.

Two weeks of structured discovery can save months of building the wrong thing. AI tools make those two weeks dramatically more productive, but they don't replace the discipline of asking the right questions first. Teams that discover fast build with conviction. Teams that skip discovery build with hope.

Hope is not a product strategy. And in 2026, with the tools available to you, there's no excuse for it.

Have you used AI tools in your product discovery process? What worked and what didn't? Reply to this email to share your experience. I read every response and often feature reader insights in future newsletters.
